# UTF-16 to UTF-8 converter code #

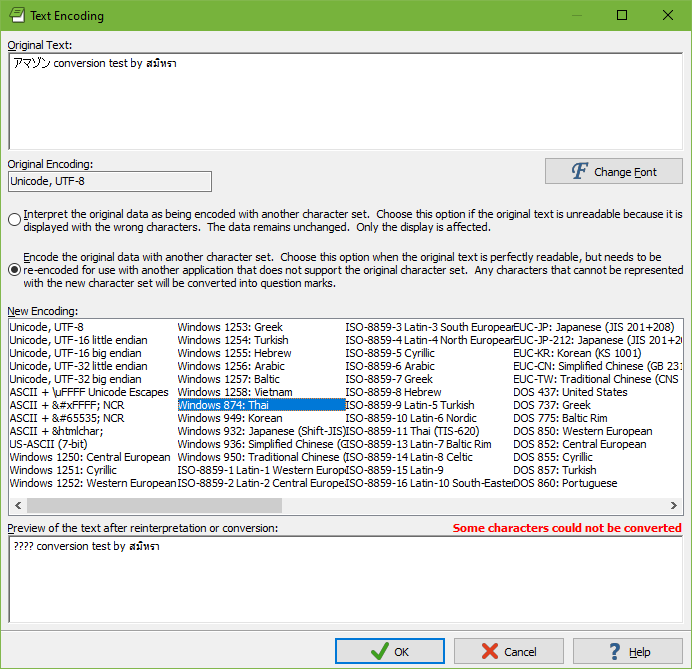

First off, please see my reply to your related question from another topic, which covers the basics of UTF-8, UTF-16LE, etc.: BCP - unicode

Secondly, using a DATAFILETYPE of "widechar" is incorrect for UTF-8. "widechar" indicates UTF-16LE, the 16-bit encoding used by NVARCHAR / NCHAR / NTEXT / XML. You need to use "char", as that is for 8-bit encodings, which is what UTF-8 is.

Thirdly, you don't mention, either here or in the related question, which version of SQL Server you are using. But you posted both towards the end of 2015, so I should mention that starting with SQL Server 2014 SP2, code page 65001 (which is UTF-8) is supported in bcp and BULK INSERT.

Fourthly, for anyone using SQL Server 2014 SP1 or older, the incoming data does need to be converted at some point.
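To see why "widechar" and UTF-8 don't mix, here is a minimal Python sketch. Python is used only for illustration, and nothing in the thread depends on it. The sketch encodes the same string as UTF-8 and as UTF-16LE, then decodes the UTF-8 bytes as if they were UTF-16LE. That is roughly the mismatch you set up when a UTF-8 file is loaded as 'widechar'.

```python
# Illustrative sketch: the same text in UTF-8 (8-bit code units)
# and UTF-16LE (16-bit code units, what 'widechar' means).
text = "Café"

utf8_bytes = text.encode("utf-8")       # 'é' becomes two bytes: c3 a9
utf16_bytes = text.encode("utf-16-le")  # every character here is two bytes, no BOM

print(utf8_bytes.hex(" "))   # 43 61 66 c3 a9
print(utf16_bytes.hex(" "))  # 43 00 61 00 66 00 e9 00

# Reading UTF-8 bytes as UTF-16LE pairs up unrelated bytes.
# UTF-16 needs an even byte count, so pad with one zero byte if necessary.
padded = utf8_bytes + b"\x00" * (len(utf8_bytes) % 2)
misread = padded.decode("utf-16-le")
print(misread == text)  # False
```

The UTF-8 form is 5 bytes and the UTF-16LE form is 8. The misread string matches none of the original characters, which is why "char" is the right DATAFILETYPE for 8-bit data such as UTF-8.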

# UTF-16 to UTF-8 converter install #

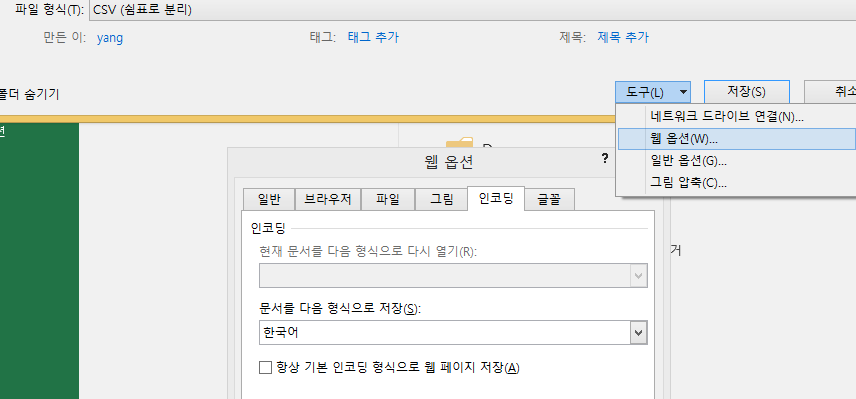

On a daily basis, we receive multiple CSV files which are in UTF-8 encoding. As you all know, the BULK INSERT / bcp commands are made to work with UTF-16LE files. I found a great way to convert the file using PowerShell:

    Set = 'xp_cmdshell ''powershell.exe -command "get-content ' + + ' | out-file -filepath ' + + ' -encoding Unicode"'''
    Exec

The problem is that the customer we are dealing with doesn't want to enable xp_cmdshell (which is understandable for some and not for others).

I tried BULK INSERT on a UTF-8 encoded file, and it seems to work, but I see extra characters in the first row, which puts in doubt the validity of loading a UTF-8 file with BULK INSERT:

    Bulk insert #tmp from 'C:\Users\John_Doe\The_UTF8_File.csv' with (DATAFILETYPE = 'widechar', Firstrow = 1, ROWTERMINATOR = '')

Without having to install or change anything on the client's server, how can I automate this conversion process so that the bulk insert works properly? It doesn't seem to be that simple, since I can't find a solution anywhere on the web.
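For SQL Server 2014 SP1 or older, where the file does need converting, here is a minimal Python sketch of the same conversion the PowerShell command performs. It takes UTF-8 in and produces UTF-16LE with a byte order mark, which is what `-encoding Unicode` writes. The sketch assumes it runs on some machine outside SQL Server that handles the files before the load, so xp_cmdshell is not involved. The function names `utf8_to_utf16le` and `convert_file` are hypothetical. The sketch decodes with Python's `utf-8-sig` codec, which drops a leading UTF-8 BOM (EF BB BF). A BOM is one common source of stray characters at the start of the first row, but nothing in the thread confirms that is the cause here. On 2014 SP2 or later, no conversion should be needed: load the file as "char" with code page 65001 instead.

```python
import codecs
from pathlib import Path


def utf8_to_utf16le(data: bytes) -> bytes:
    """Hypothetical helper: re-encode UTF-8 bytes as UTF-16LE with a BOM."""
    # 'utf-8-sig' strips a leading UTF-8 BOM if present, so it cannot
    # survive as extra characters at the start of the first row.
    text = data.decode("utf-8-sig")
    # 'widechar' loads expect UTF-16LE; prefix the UTF-16LE BOM (FF FE),
    # as PowerShell's '-encoding Unicode' output does.
    return codecs.BOM_UTF16_LE + text.encode("utf-16-le")


def convert_file(src: Path, dst: Path) -> None:
    """Hypothetical helper: convert one CSV file on disk.

    Reading and writing raw bytes leaves the line endings unchanged.
    """
    dst.write_bytes(utf8_to_utf16le(src.read_bytes()))


if __name__ == "__main__":
    # In-memory demo: a UTF-8 CSV that starts with a BOM.
    sample = codecs.BOM_UTF8 + "id,name\r\n1,Café\r\n".encode("utf-8")
    converted = utf8_to_utf16le(sample)
    print(converted[:2].hex(" "))  # ff fe
    print(converted[2:].decode("utf-16-le") == "id,name\r\n1,Café\r\n")  # True
```

For the daily batch, `convert_file` could be called once per incoming CSV before the load.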